
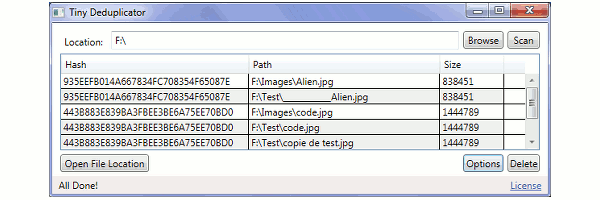

Notes: Actual memory use depends on the number of unique messages being stored, not on the throughput. In practice, if messages are received multiple times on average, memory use will generally not exceed a fraction of the full peak.

Note: The Deduplicator extends EventEmitter, and users need to handle all of the events it emits. See /test for template applications that fulfill these requirements and simulate fast and slow scenarios.

Lots of duplicate messages are sent at high speeds in telecom, healthcare, and finance. In this use case, the lifetime can likely be low. In healthcare, for instance, it is uncommon for duplicates to occur over the MLLP protocol outside of the span of a few seconds. Setting the lifetime at 60 seconds is a safe window to prevent duplicates; peak memory consumption will be capped below 520 MB, and the system can process 50,000 messages per second. For multiples of these figures, consider using PM2 to run multiple instances of deduplicator (effectively leveraging multiple CPU cores).

`var DeDuplicator = require('deduplicator')`

Getting on-the-fly notifications of unique events: Stream your daily logs through the deduplicator to get a condensed view of which unique events recently occurred. This works well for data sets with hundreds or thousands of unique daily events, but with many occurrences of each of those events. Capping memory at 512 MB allows throughput of 3 million daily unique events, which is much higher than the intended size for this use case. Contact me if you have questions about this.
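The sizing figures quoted above can be sanity-checked with a little arithmetic. The sketch below is only a back-of-the-envelope estimate: the helper names `peakEntries` and `bytesPerEntry` are made up for illustration, it assumes the worst case where every message is unique, and it treats 1 MB as 1,000,000 bytes. The per-message figure it prints is implied by the quoted numbers, not a measured value.

```javascript
// Back-of-the-envelope sizing from the figures quoted in the text.
// Assumptions (not from the module): every message is unique (worst case),
// and 1 MB = 1,000,000 bytes. Function names are hypothetical.

// Worst-case number of unique messages held in memory at once.
function peakEntries(messagesPerSecond, lifetimeSeconds) {
  return messagesPerSecond * lifetimeSeconds;
}

// Implied memory budget per stored message, in bytes.
function bytesPerEntry(memoryMb, entries) {
  return (memoryMb * 1e6) / entries;
}

const entries = peakEntries(50000, 60);
console.log(entries); // 3000000
console.log(Math.round(bytesPerEntry(520, entries))); // 173
```

The 3,000,000 stored messages in the 60-second scenario line up with the 3 million daily unique events quoted for the 512 MB cap, which fits the point that memory depends on how many unique messages are held rather than on raw throughput.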

`npm install deduplicator`

Recommended Redis configuration: Redis must be configured for predictable behavior.

See the example use cases and their respective memory consumptions. The figures are for a single core (multiply memory by the number of instances). One such use case is removing duplicate messages from low-level protocol communications.

Deduplicator accepts one integer as input: the lifetime of messages, in seconds. The first receipt of a given message X will be held in memory for 'lifetime' seconds, and during that time any duplicates of X will be detectable. After the lifetime expires, receipt of a new message Y that is equal to X will not be considered a duplicate (receipt of Y will in turn prevent any duplicates of Y for 'lifetime' seconds). If duplicates are expected to be received in long windows of time, the lifetime must reflect this.
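The lifetime rule described here can be illustrated with a small in-memory sketch. This is not the module's implementation or API: the class name `LifetimeDeduplicator`, the method `isDuplicate`, the injectable clock, and the choice of SHA-1 are all assumptions made for illustration, and a plain `Map` stands in for the Redis hash the module is built on.

```javascript
// Minimal in-memory sketch of lifetime-based deduplication.
// NOTE: LifetimeDeduplicator and isDuplicate are hypothetical names,
// not the deduplicator module's API; a Map stands in for Redis here.
const crypto = require('crypto');

class LifetimeDeduplicator {
  constructor(lifetimeSeconds, now = () => Date.now()) {
    this.lifetimeMs = lifetimeSeconds * 1000;
    this.now = now;          // injectable clock, handy for testing
    this.expiry = new Map(); // message hash -> expiry timestamp (ms)
  }

  // Returns true if an equal message was first received within the lifetime window.
  isDuplicate(message) {
    const key = crypto.createHash('sha1').update(message).digest('hex');
    const t = this.now();
    const expiresAt = this.expiry.get(key);
    if (expiresAt !== undefined && expiresAt > t) {
      return true; // still inside the window opened by the first receipt
    }
    // First receipt, or the earlier one has expired: open a new window.
    this.expiry.set(key, t + this.lifetimeMs);
    return false;
  }

  // Drop expired entries so memory tracks the number of live unique messages.
  sweep() {
    const t = this.now();
    for (const [key, expiresAt] of this.expiry) {
      if (expiresAt <= t) this.expiry.delete(key);
    }
  }
}

// Usage with a fake clock (milliseconds) and a 60-second lifetime.
let clock = 0;
const dedup = new LifetimeDeduplicator(60, () => clock);
console.log(dedup.isDuplicate('X')); // false (first receipt)
clock = 30000;
console.log(dedup.isDuplicate('X')); // true (inside the window)
clock = 61000;
console.log(dedup.isDuplicate('X')); // false (window expired, new one opens)
```

In this sketch a duplicate does not extend the window; only the first receipt after expiry opens a new one, which matches the X and Y behaviour described in the text.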

A glorified Redis hash for removing duplicate messages from streams of data. By using efficient hashing and factoring in message lifetimes, this module can tackle most deduplication scenarios with basic hardware. It works effectively both in high-speed, real-time scenarios (speed capped at around 50,000 msg/sec per Node.js thread) and in lightweight settings (memory and CPU consumption is low if the load is low), and it is designed to prevent a need for more complex deduplication approaches. Used in production by Scalabull to eliminate duplicate patient records on-the-fly.
